I was recently in a secret demo run by the CUDA and Polars teams. They passed me through a metal detector, put a bag over my head, and drove me to a shack in the woods of rural France. They took my phone, wallet, and passport to ensure I wouldn’t spill the beans before finally showing off what they’ve been working on.

Or, that’s what it felt like. In reality it was a Zoom meeting where they politely asked me not to say anything until a specified time, but as a tech writer the mystery had me feeling a little like James Bond.

In this article we’ll discuss the content of that meeting: a new execution engine in Polars that enables GPU-accelerated computation, allowing for interactive data manipulation of 100GB+ of data. We’ll discuss what a dataframe is in Polars, how GPU acceleration works with Polars dataframes, and how much of a performance boost one can expect from the new CUDA-powered execution engine.

Who is this useful for? Anyone who works with data and wants to work faster.

How advanced is this post? This post contains simple but cutting-edge data engineering concepts. It’s relevant to readers of all levels.

Pre-requisites: None

Note: At the time of writing I am not affiliated with or endorsed by Polars or Nvidia in any way.

Polars In a Nutshell

In Polars you can create and manipulate dataframes (which are like super powerful spreadsheets). Here I’m making a simple dataframe consisting of a few people, their age, and the city they live in.

""" Creating a simple dataframe in polars
"""
import polars as pl

df = pl.DataFrame({
    "name": ["Alice", "Bob", "Charlie", "Jill", "William"],
    "age": [25, 30, 35, 22, 40],
    "city": ["New York", "Los Angeles", "Chicago", "New York", "Chicago"]
})

print(df)

shape: (5, 3)
┌─────────┬─────┬─────────────┐
│ name    ┆ age ┆ city        │
│ ---     ┆ --- ┆ ---         │
│ str     ┆ i64 ┆ str         │
╞═════════╪═════╪═════════════╡
│ Alice   ┆ 25  ┆ New York    │
│ Bob     ┆ 30  ┆ Los Angeles │
│ Charlie ┆ 35  ┆ Chicago     │
│ Jill    ┆ 22  ┆ New York    │
│ William ┆ 40  ┆ Chicago     │
└─────────┴─────┴─────────────┘

Using this dataframe, you can do things like filter by age,

""" Filtering the previously defined dataframe to only show rows that have
an age of over 28
"""
df_filtered = df.filter(pl.col("age") > 28)
print(df_filtered)

shape: (3, 3)
┌─────────┬─────┬─────────────┐
│ name    ┆ age ┆ city        │
│ ---     ┆ --- ┆ ---         │
│ str     ┆ i64 ┆ str         │
╞═════════╪═════╪═════════════╡
│ Bob     ┆ 30  ┆ Los Angeles │
│ Charlie ┆ 35  ┆ Chicago     │
│ William ┆ 40  ┆ Chicago     │
└─────────┴─────┴─────────────┘

you can do math,

""" Creating a new column called "age_doubled" which is double the age
column.
"""
df = df.with_columns([
    (pl.col("age") * 2).alias("age_doubled")
])
print(df)

shape: (5, 4)
┌─────────┬─────┬─────────────┬─────────────┐
│ name    ┆ age ┆ city        ┆ age_doubled │
│ ---     ┆ --- ┆ ---         ┆ ---         │
│ str     ┆ i64 ┆ str         ┆ i64         │
╞═════════╪═════╪═════════════╪═════════════╡
│ Alice   ┆ 25  ┆ New York    ┆ 50          │
│ Bob     ┆ 30  ┆ Los Angeles ┆ 60          │
│ Charlie ┆ 35  ┆ Chicago     ┆ 70          │
│ Jill    ┆ 22  ┆ New York    ┆ 44          │
│ William ┆ 40  ┆ Chicago     ┆ 80          │
└─────────┴─────┴─────────────┴─────────────┘

and you can perform aggregate functions, like calculating the average age in a city.

""" Calculating the average age by city
"""
df_aggregated = df.group_by("city").agg(pl.col("age").mean())
print(df_aggregated)

shape: (3, 2)
┌─────────────┬──────┐
│ city        ┆ age  │
│ ---         ┆ ---  │
│ str         ┆ f64  │
╞═════════════╪══════╡
│ Chicago     ┆ 37.5 │
│ New York    ┆ 23.5 │
│ Los Angeles ┆ 30.0 │
└─────────────┴──────┘

Most people reading this are probably familiar with Pandas, which is Python’s more popular dataframe library. I think, before we get into GPU accelerated polars, it might be useful to explore an important feature which differentiates Polars from Pandas.

Polars LazyFrames

Polars has two fundamental execution modes, “eager” and “lazy”. An eager dataframe does calculations as soon as they are called, exactly as they are called. If you add 2 to every value in a column, then add 3 to every value in that column, an eager dataframe executes each of those operations just as you would expect: every value gets 2 added to it, then each of those values gets 3 added to it, at the exact moment you tell Polars those operations should happen.

import polars as pl

# Create a DataFrame with a list of numbers
df = pl.DataFrame({
    "numbers": [1, 2, 3, 4, 5]
})

# Add 2 to every number and overwrite the original 'numbers' column
df = df.with_columns(
    pl.col("numbers") + 2
)

# Add 3 to the updated 'numbers' column
df = df.with_columns(
    pl.col("numbers") + 3
)

print(df)

shape: (5, 1)
┌─────────┐
│ numbers │
│ ---     │
│ i64     │
╞═════════╡
│ 6       │
│ 7       │
│ 8       │
│ 9       │
│ 10      │
└─────────┘

If we instead initialize our dataframe with the .lazy() function, we’ll get a very different output.

import polars as pl

# Create a lazy DataFrame with a list of numbers
df = pl.DataFrame({
    "numbers": [1, 2, 3, 4, 5]
}).lazy()  # <-------------------------- Lazy Initialization

# Add 2 to every number and overwrite the original 'numbers' column
df = df.with_columns(
    pl.col("numbers") + 2
)

# Add 3 to the updated 'numbers' column
df = df.with_columns(
    pl.col("numbers") + 3
)

print(df)

naive plan: (run LazyFrame.explain(optimized=True) to see the optimized plan)

 WITH_COLUMNS:
 [[(col("numbers")) + (3)]]
   WITH_COLUMNS:
   [[(col("numbers")) + (2)]]
    DF ["numbers"]; PROJECT */1 COLUMNS; SELECTION: "None"

Instead of getting a dataframe, we get a query plan: an SQL-like expression which outlines what operations need to happen to get the dataframe that we want. Nothing has been computed yet. We can call .collect() on this to actually run those calculations and get our dataframe.

print(df.collect())

shape: (5, 1)
┌─────────┐
│ numbers │
│ ---     │
│ i64     │
╞═════════╡
│ 6       │
│ 7       │
│ 8       │
│ 9       │
│ 10      │
└─────────┘

At first blush this might not seem useful: Who cares if we do all our calculations at the end versus throughout the code? No one, really. The benefit of this system isn’t when calculations happen, it’s what calculations happen.
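To make the "what calculations happen" idea concrete, here is a toy sketch in plain Python. It is not how Polars works internally; `ToyLazyColumn` and its methods are hypothetical names invented for this illustration. The class only records additions when they are called, then fuses them into a single addition when `collect()` runs.

```python
class ToyLazyColumn:
    """Toy stand-in for a lazy column: records additions instead of running them."""

    def __init__(self, values):
        self.values = list(values)
        self.pending = []  # queued constants to add, in call order

    def add(self, constant):
        # Nothing is computed here; the operation is only recorded.
        self.pending.append(constant)
        return self

    def plan(self, optimized=False):
        # The "optimized" plan fuses all queued additions into a single one.
        if optimized and self.pending:
            return [sum(self.pending)]
        return list(self.pending)

    def collect(self):
        # Run the optimized plan: one pass over the data instead of one per operation.
        result = self.values
        for constant in self.plan(optimized=True):
            result = [v + constant for v in result]
        return result


numbers = ToyLazyColumn([1, 2, 3, 4, 5]).add(2).add(3)
print(numbers.plan())                # [2, 3]
print(numbers.plan(optimized=True))  # [5]
print(numbers.collect())             # [6, 7, 8, 9, 10]
```

The result is the same as adding 2 and then 3, but the data is only walked once. A real query optimizer applies far more sophisticated rewrites than this, but the principle of deferring work so it can be rearranged is the same.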

Before executing a lazy dataframe, Polars looks at the operations that have stacked up and figures out any shortcuts that could result in faster execution. This process, generally, is called “query optimization”. For instance, if we whip up a lazy dataframe and then run some operations on it, printing the result shows the naive (unoptimized) query plan:

# Create a DataFrame with a list of numbers
df = pl.DataFrame({
    "col_0": [1, 2, 3, 4, 5],
    "col_1": [8, 7, 6, 5, 4],
    "col_2": [-1, -2, -3, -4, -5]
}).lazy()

# Doing some random operations
df = df.filter(pl.col("col_0") > 0)
df = df.with_columns((pl.col("col_1") * 2).alias("col_1_double"))
df = df.group_by("col_2").agg(pl.sum("col_1_double"))

print(df)

naive plan: (run LazyFrame.explain(optimized=True) to see the optimized plan)

AGGREGATE
    [col("col_1_double").sum()] BY [col("col_2")] FROM
     WITH_COLUMNS:
     [[(col("col_1")) * (2)].alias("col_1_double")]
      FILTER [(col("col_0")) > (0)] FROM
        DF ["col_0", "col_1", "col_2"]; PROJECT */3 COLUMNS; SELECTION: "None"

But if we run .explain(optimized=True) on that dataframe we’ll get a different expression, which is what polars has deemed to be a more optimal way of doing the same thing.

print(df.explain(optimized=True))

AGGREGATE
    [col("col_1_double").sum()] BY [col("col_2")] FROM
     WITH_COLUMNS:
     [[(col("col_1")) * (2)].alias("col_1_double")]
      DF ["col_0", "col_1", "col_2"]; PROJECT */3 COLUMNS; SELECTION: "[(col(\"col_0\")) > (0)]"

This optimized expression is what actually gets run when you call .collect() on a lazy dataframe.

This isn’t just fancy and fun, it can result in some pretty serious performance increases. Here I’m running the same operations on two identical dataframes, one which is eager and one which is lazy. I’m averaging the execution time over 10 runs and calculating the average speed difference.

"""Performing the same operations on the same data between two dataframes,
one with eager execution and one with lazy execution, and calculating the
difference in execution speed.
"""
import polars as pl
import numpy as np
import time

# Specifying constants
num_rows = 20_000_000  # 20 million rows
num_cols = 10          # 10 columns
n = 10                 # Number of times to repeat the test

# Generate random data
np.random.seed(0)  # Set seed for reproducibility
data = {f"col_{i}": np.random.randn(num_rows) for i in range(num_cols)}

# Define a function that works for both lazy and eager DataFrames
def apply_transformations(df):
    df = df.filter(pl.col("col_0") > 0)  # Filter rows where col_0 is greater than 0
    df = df.with_columns((pl.col("col_1") * 2).alias("col_1_double"))  # Double col_1
    df = df.group_by("col_2").agg(pl.sum("col_1_double"))  # Group by col_2 and aggregate
    return df

# Variables to store total durations for eager and lazy execution
total_eager_duration = 0
total_lazy_duration = 0

# Perform the test n times
for i in range(n):
    print(f"Run {i+1}/{n}")

    # Create fresh DataFrames for each run (polars operations can be in-place, so ensure clean DF)
    df1 = pl.DataFrame(data)
    df2 = pl.DataFrame(data).lazy()

    # Measure eager execution time
    start_time_eager = time.time()
    eager_result = apply_transformations(df1)  # Eager execution
    eager_duration = time.time() - start_time_eager
    total_eager_duration += eager_duration
    print(f"Eager execution time: {eager_duration:.2f} seconds")

    # Measure lazy execution time
    start_time_lazy = time.time()
    lazy_result = apply_transformations(df2).collect()  # Lazy execution
    lazy_duration = time.time() - start_time_lazy
    total_lazy_duration += lazy_duration
    print(f"Lazy execution time: {lazy_duration:.2f} seconds")

# Calculating the average execution time
average_eager_duration = total_eager_duration / n
average_lazy_duration = total_lazy_duration / n

# Calculating how much faster lazy execution was
faster = (average_eager_duration-average_lazy_duration)/average_eager_duration*100

print(f"\nAverage eager execution time over {n} runs: {average_eager_duration:.2f} seconds")
print(f"Average lazy execution time over {n} runs: {average_lazy_duration:.2f} seconds")
print(f"Lazy took {faster:.2f}% less time")

Run 1/10
Eager execution time: 3.07 seconds
Lazy execution time: 2.70 seconds
Run 2/10
Eager execution time: 4.17 seconds
Lazy execution time: 2.69 seconds
Run 3/10
Eager execution time: 2.97 seconds
Lazy execution time: 2.76 seconds
Run 4/10
Eager execution time: 4.21 seconds
Lazy execution time: 2.74 seconds
Run 5/10
Eager execution time: 2.97 seconds
Lazy execution time: 2.77 seconds
Run 6/10
Eager execution time: 4.12 seconds
Lazy execution time: 2.80 seconds
Run 7/10
Eager execution time: 3.00 seconds
Lazy execution time: 2.72 seconds
Run 8/10
Eager execution time: 4.53 seconds
Lazy execution time: 2.76 seconds
Run 9/10
Eager execution time: 3.14 seconds
Lazy execution time: 3.08 seconds
Run 10/10
Eager execution time: 4.26 seconds
Lazy execution time: 2.77 seconds

Average eager execution time over 10 runs: 3.64 seconds
Average lazy execution time over 10 runs: 2.78 seconds
Lazy took 23.75% less time
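If you want to repeat this kind of measurement yourself, the timing loop can be pulled into a small helper. This is a sketch rather than the code used for the numbers above: `average_runtime` is a hypothetical helper name, and it swaps `time.time()` for `time.perf_counter()`, which is the standard library's clock intended for measuring short durations. It also adds an optional untimed warmup run.

```python
import time

def average_runtime(fn, repeats=10, warmup=1):
    """Return the mean wall-clock time of fn() in seconds over `repeats` runs."""
    for _ in range(warmup):
        fn()  # untimed runs, so one-off setup costs don't skew the average
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()  # monotonic, high-resolution clock
        fn()
        durations.append(time.perf_counter() - start)
    return sum(durations) / len(durations)

# Stand-in workload; in the benchmark above this would be something like
# lambda: apply_transformations(df2).collect()
mean_seconds = average_runtime(lambda: sum(range(100_000)), repeats=5)
print(f"Average over 5 runs: {mean_seconds:.6f} seconds")
```

Passing the workload in as a function keeps the eager and lazy measurements on exactly the same footing.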

Taking 23.75% less time is nothing to sneeze at, and is enabled by lazy execution (which is not present in Pandas). Under the covers, when you use a Polars lazy dataframe you’re essentially defining a high-level computational graph, which Polars takes and does all sorts of fancy magic to. After it optimizes that query it then executes it, meaning you get the same results you would have gotten with an eager dataframe, but usually faster.

Personally, I’m a Pandas guy, and haven’t really seen a big reason to make the switch. I figured “It might be better, but it’s probably not better enough for me to switch one of my most fundamental tools”. If that sounds like you, and 23.75% doesn’t raise your eyebrows, boy do I have some results for you.

Introducing Polars with GPU Execution

The functionality for this is hot off the press, so I’m not 100% sure how you will be able to leverage GPU acceleration in your environment. At the time of writing I was given a wheel file (which is like a local library you can install). I’m under the impression that, to get rocking and rolling after this article has been released, you can use the following command to install Polars with GPU acceleration on your machine.

pip install polars[gpu] --extra-index-url=https://pypi.nvidia.com

I also expect there might be some documentation on the Polars PyPI page if that doesn’t work. Regardless, once you’re up and running, you can start using the power of Polars on your GPU. The only thing you have to do to your code is specify the GPU as the engine when you collect a lazy dataframe.

On top of comparing eager and lazy execution from the previous test, let’s also compare lazy execution with the GPU engine. We can do that with the line results = df.collect(engine=gpu_engine) where gpu_engine is specified based on the following:

gpu_engine = pl.GPUEngine(
    device=0,            # This is the default
    raise_on_fail=True,  # Fail loudly if we can't run on the GPU.
)

The GPU execution engine doesn’t support all Polars functionality, and will fall back to the CPU by default. By setting raise_on_fail=True we’re specifying that we want the code to raise an exception if GPU execution is not supported. OK, here’s the actual code.

"""Performing the same operations on the same data between three dataframes,
one with eager execution, one with lazy execution, and one with lazy execution
and GPU acceleration. Calculating the difference in execution speed between the
three.
"""
import polars as pl
import numpy as np
import time

# Creating a large random DataFrame
num_rows = 20_000_000  # 20 million rows
num_cols = 10          # 10 columns
n = 10                 # Number of times to repeat the test

# Generate random data
np.random.seed(0)  # Set seed for reproducibility
data = {f"col_{i}": np.random.randn(num_rows) for i in range(num_cols)}

# Defining a function that works for both lazy and eager DataFrames
def apply_transformations(df):
    df = df.filter(pl.col("col_0") > 0)  # Filter rows where col_0 is greater than 0
    df = df.with_columns((pl.col("col_1") * 2).alias("col_1_double"))  # Double col_1
    df = df.group_by("col_2").agg(pl.sum("col_1_double"))  # Group by col_2 and aggregate
    return df

# Variables to store total durations for eager, lazy, and lazy GPU execution
total_eager_duration = 0
total_lazy_duration = 0
total_lazy_GPU_duration = 0

# Performing the test n times
for i in range(n):
    print(f"Run {i+1}/{n}")

    # Create fresh DataFrames for each run (polars operations can be in-place, so ensure clean DF)
    df1 = pl.DataFrame(data)
    df2 = pl.DataFrame(data).lazy()
    df3 = pl.DataFrame(data).lazy()  # separate lazy frame for the GPU run

    # Measure eager execution time
    start_time_eager = time.time()
    eager_result = apply_transformations(df1)  # Eager execution
    eager_duration = time.time() - start_time_eager
    total_eager_duration += eager_duration
    print(f"Eager execution time: {eager_duration:.2f} seconds")

    # Measure lazy execution time
    start_time_lazy = time.time()
    lazy_result = apply_transformations(df2).collect()  # Lazy execution
    lazy_duration = time.time() - start_time_lazy
    total_lazy_duration += lazy_duration
    print(f"Lazy execution time: {lazy_duration:.2f} seconds")

    # Defining GPU Engine
    gpu_engine = pl.GPUEngine(
        device=0,            # This is the default
        raise_on_fail=True,  # Fail loudly if we can't run on the GPU.
    )

    # Measure lazy execution time on the GPU
    # (the printed label is the same as the CPU lazy run; the third time in each run is the GPU)
    start_time_lazy_GPU = time.time()
    lazy_result = apply_transformations(df3).collect(engine=gpu_engine)  # Lazy execution with GPU
    lazy_GPU_duration = time.time() - start_time_lazy_GPU
    total_lazy_GPU_duration += lazy_GPU_duration
    print(f"Lazy execution time: {lazy_GPU_duration:.2f} seconds")

# Calculating the average execution time
average_eager_duration = total_eager_duration / n
average_lazy_duration = total_lazy_duration / n
average_lazy_GPU_duration = total_lazy_GPU_duration / n

# Calculating how much faster lazy and GPU execution were
faster_1 = (average_eager_duration-average_lazy_duration)/average_eager_duration*100
faster_2 = (average_lazy_duration-average_lazy_GPU_duration)/average_lazy_duration*100
faster_3 = (average_eager_duration-average_lazy_GPU_duration)/average_eager_duration*100

print(f"\nAverage eager execution time over {n} runs: {average_eager_duration:.2f} seconds")
print(f"Average lazy execution time over {n} runs: {average_lazy_duration:.2f} seconds")
print(f"Average lazy execution time over {n} runs: {average_lazy_GPU_duration:.2f} seconds")
print(f"Lazy was {faster_1:.2f}% faster than eager")
print(f"GPU was {faster_2:.2f}% faster than CPU Lazy and {faster_3:.2f}% faster than CPU eager")

Run 1/10
Eager execution time: 0.74 seconds
Lazy execution time: 0.66 seconds
Lazy execution time: 0.17 seconds
Run 2/10
Eager execution time: 0.72 seconds
Lazy execution time: 0.65 seconds
Lazy execution time: 0.17 seconds
Run 3/10
Eager execution time: 0.82 seconds
Lazy execution time: 0.76 seconds
Lazy execution time: 0.17 seconds
Run 4/10
Eager execution time: 0.81 seconds
Lazy execution time: 0.69 seconds
Lazy execution time: 0.18 seconds
Run 5/10
Eager execution time: 0.79 seconds
Lazy execution time: 0.66 seconds
Lazy execution time: 0.18 seconds
Run 6/10
Eager execution time: 0.75 seconds
Lazy execution time: 0.63 seconds
Lazy execution time: 0.18 seconds
Run 7/10
Eager execution time: 0.77 seconds
Lazy execution time: 0.72 seconds
Lazy execution time: 0.18 seconds
Run 8/10
Eager execution time: 0.77 seconds
Lazy execution time: 0.72 seconds
Lazy execution time: 0.17 seconds
Run 9/10
Eager execution time: 0.77 seconds
Lazy execution time: 0.72 seconds
Lazy execution time: 0.17 seconds
Run 10/10
Eager execution time: 0.77 seconds
Lazy execution time: 0.70 seconds
Lazy execution time: 0.17 seconds

Average eager execution time over 10 runs: 0.77 seconds
Average lazy execution time over 10 runs: 0.69 seconds
Average lazy execution time over 10 runs: 0.17 seconds
Lazy was 10.30% faster than eager
GPU was 74.78% faster than CPU Lazy and 77.38% faster than CPU eager

(Note: this test is similar to the previous one, but was run on a different, larger machine, so the execution times differ. In each run, the third time listed is the GPU run.)

Yep. GPU execution took 74.78% less time than lazy execution on the CPU, which works out to roughly a 4x speedup. And this isn’t even a particularly large dataset. One might expect larger performance increases for larger datasets.
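The benchmark reports its results as "percent less time", which can undersell large gains. As a quick bit of arithmetic, a job that takes p% less time is running 1 / (1 - p/100) times as fast. The helper name below is my own; the percentages are the ones printed by the two benchmarks in this article.

```python
def speedup_from_time_saved(percent_less_time):
    """Convert 'took X% less time' into an 'N times faster' factor."""
    remaining_fraction = 1 - percent_less_time / 100
    return 1 / remaining_fraction

for label, percent in [
    ("Lazy vs eager (first benchmark)", 23.75),
    ("GPU vs lazy CPU", 74.78),
    ("GPU vs eager CPU", 77.38),
]:
    print(f"{label}: {percent}% less time = {speedup_from_time_saved(percent):.2f}x")
```

So the lazy CPU gain in the first benchmark is about 1.3x, while the GPU gains are in the neighborhood of 4x.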

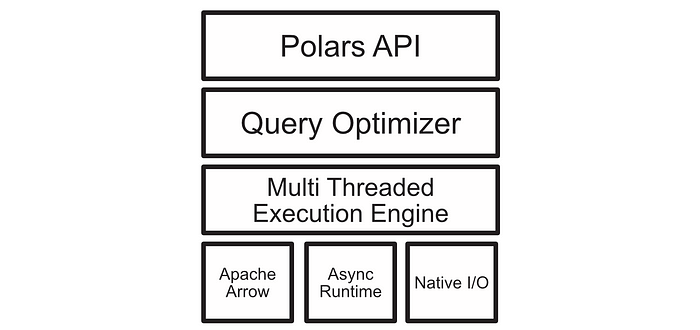

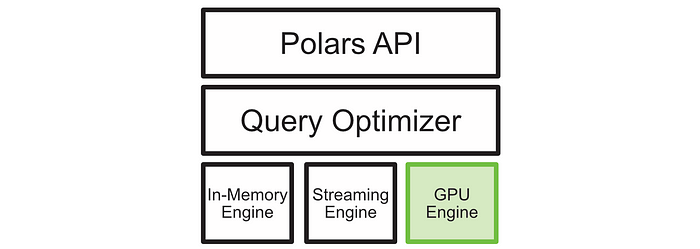

Unfortunately, I can’t share the presentation provided by the Nvidia and Polars teams, but I can describe what I understand to be happening under the hood. Basically, Polars has a few execution engines it uses for a variety of tasks, and the teams tacked on another one with GPU support.

As far as I understand, these engines are called on the fly, both based on the hardware available and the query being executed. Some queries are highly parallelizable and do exceedingly well on the GPU, while less parallelizable operations might be done with the In-Memory engine on the CPU. This, in theory, makes CUDA accelerated polars almost always faster, which I have found to be anecdotally true, with especially large speedups on larger datasets.

Abstracted Memory Management

One of the key ideas the Nvidia team presented was that the new query optimizer was clever enough to handle memory management across the CPU and GPU. For those of you that don’t have a lot of GPU programming experience, the CPU and GPU have different memory, with the CPU utilizing RAM to store information and the GPU utilizing VRAM, which is housed on the GPU itself.

One can imagine a scenario where a Polars dataframe is created and processed with the GPU execution engine. Then, one can imagine an operation which requires that dataframe to interact with another dataframe which is still on the CPU. The Polars query optimizer is capable of understanding and handling this discrepancy by passing data between the CPU and GPU as needed.

To me this is a tremendous asset with a thorny inconvenience. When you’re doing big and heavy workloads (building AI models for instance) on the GPU, it’s often important to manage memory consumption fairly strictly. I imagine workflows featuring large models which take up a substantial portion of the GPU might have issues accommodating polars essentially doing whatever it wants with your data. I noticed that the GPU execution engine seems to be directed to a single GPU, so maybe machines with more than one GPU can isolate memory more strictly.

Regardless though, I think this is of little practical concern for most people. Generally speaking, when doing data science stuff you start with raw data, do a bunch of data manipulation, then save artifacts which are designed to be model-ready. I struggle to think of a use case where you have to do big data manipulations and modeling jobs on the same machine at the same time. If that does seem like a big problem to you, though, the Nvidia and Polars teams are currently investigating explicit memory control, which may be present in future releases. For pure data manipulation workloads, I imagine the automatic handling of RAM and VRAM will be a tremendous time saver to many data scientists and engineers out there.

Conclusion

Exciting stuff, and hot off the press at that. Normally I spend weeks reviewing topics that have been established for months if not years, so a “pre-release” is a bit new for me.

Frankly, I have no idea how impactful this will be on my general workflow. I’ll likely still be rocking Pandas for a lot of my cowboy coding in Google Colab, simply because I’m comfortable with it, but when faced with big dataframes and computationally expensive queries I think I’ll find myself reaching for Polars more often, and I expect to eventually integrate it into a core part of my workflow. A big reason for that is the astronomical speed increase of GPU-accelerated Polars over virtually any other dataframe tooling.
